Abstraction via Repetition

In a fastAI class, I asked the following:

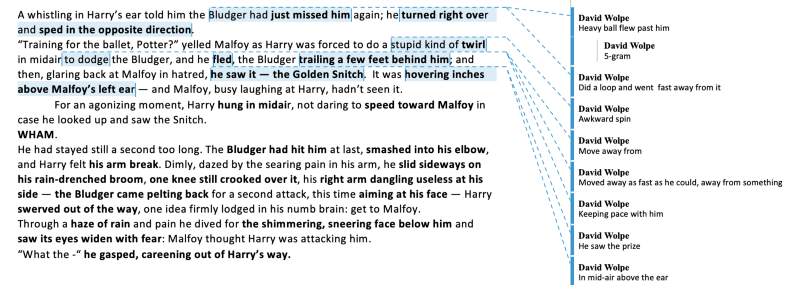

“Do any language models attempt to provide meaning? For instance, ‘I’m going to the store’ is the opposite of ‘I’m not going to the store.’ Or ‘I barely understand this stuff’ and ‘That ball came so close to my ear I heard it whistle’ both contain the idea of something almost happening, being right on the border. Is there a way to indicate this kind of subtlety in a language model?”

And the answer came back:

Yeah, absolutely our language model will have all of that in it, or hopefully it will have it, or learn about it. We don’t have to program that. The whole point of machine learning is it learns it for itself, but when it sees a sentence like ‘Hey, careful, that ball nearly hit me,’ the expectation of what word is going to happen next is going to be different to the sentence ‘Hey, that ball hit me!’ So, so yeah, language models generally you see in practice tend to get really good at understanding all of these nuances of, of English or whatever language it’s learning about.

Let’s take a closer look:

WHAM.

He had stayed still a second too long. The Bludger had hit him at last, smashed into his elbow, and Harry felt his arm break.

It is possible to extract sense from the above by doing a kind of simultaneous translation from words to meanings (meanings expressed, that is, by other words). Notice that this isn’t a regular one-, two-, or n-word affair. Meanings are contained in variable-length strings: sometimes a single word provides the meaning; sometimes it takes more. The general rule of thumb is: what would it take to explain it to another human being?
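To make the variable-length point concrete, here is a minimal sketch of greedy longest-match span labeling in Python. It assumes a small hand-written lexicon (`LEXICON`, `label_spans`, and the glosses are all hypothetical, invented for this example) standing in for what a model would have to learn; at each position it tries the longest span first and falls back to shorter ones.

```python
import re

# Hypothetical phrase lexicon: each key is a lowercase token sequence and each
# value is a short gloss of its meaning. A learned model would replace this.
LEXICON = {
    ("wham",): "sudden impact",
    ("stayed", "still"): "no movement",
    ("a", "second", "too", "long"): "overflow (time)",
    ("hit", "him", "at", "last"): "anticipated event finally happens",
    ("arm", "break"): "injury",
}
MAX_SPAN = max(len(phrase) for phrase in LEXICON)


def tokenize(text):
    """Lowercase and keep alphabetic tokens only (crude, for illustration)."""
    return re.findall(r"[a-z]+", text.lower())


def label_spans(text):
    """Greedy longest-match: map variable-length spans to their glosses."""
    tokens = tokenize(text)
    labels = []
    i = 0
    while i < len(tokens):
        # Try the longest candidate span first, then shorter ones.
        for n in range(min(MAX_SPAN, len(tokens) - i), 0, -1):
            span = tuple(tokens[i:i + n])
            if span in LEXICON:
                labels.append((" ".join(span), LEXICON[span]))
                i += n
                break
        else:
            i += 1  # no known phrase starts here; advance one token
    return labels


passage = ("He had stayed still a second too long. The Bludger had hit him "
           "at last, smashed into his elbow, and Harry felt his arm break.")
for span, meaning in label_spans(passage):
    print(f"{span!r} -> {meaning}")
```

On the passage above it finds four spans of two to four words each (“stayed still”, “a second too long”, “hit him at last”, “arm break”), while “WHAM” alone would match a one-word entry. Preferring the longest match is only one policy; a real system would need to score overlapping candidates rather than commit greedily.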

What we’re after is automated scan-labeling: scanning over ideas of varying length, representing them mathematically, and allowing each one to carry multiple labels. It’s like recognizing a bear from its parts: a bear’s eye, a bear’s pupil, a bear’s angular contrast diagonal (at a low level); a bear’s fur, a bear’s head, and so on. An enumeration of all the properties of a bear adds up to a bear. But this is done via averaging, via approximation. Recurrent averaging is what makes machine generalization possible in the first place.
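One way to sketch scan-labeling with averaging is mean pooling: slide windows of several lengths over the tokens, average the word vectors in each window, and attach every concept label whose prototype vector is close enough by cosine similarity. The embeddings, prototypes, and the 0.5 threshold below are hypothetical hand-set values, and plain mean pooling is a swapped-in stand-in for the averaging described above, not a claim about how any particular model works.

```python
import math

# Hypothetical 3-d embeddings with dimensions (impact, time overflow, body part).
# Values are hand-set for illustration; a trained model would learn them.
EMB = {
    "wham": (1.0, 0.0, 0.0),
    "hit": (0.9, 0.0, 0.3),
    "too": (0.0, 1.0, 0.0),
    "long": (0.0, 0.8, 0.0),
    "arm": (0.0, 0.0, 1.0),
    "elbow": (0.0, 0.0, 0.9),
}
# One prototype vector per concept label.
PROTOTYPES = {
    "impact": (1.0, 0.0, 0.0),
    "overflow": (0.0, 1.0, 0.0),
    "body": (0.0, 0.0, 1.0),
}


def mean_vector(words):
    """Average the vectors of known words; None if no word is known."""
    vecs = [EMB[w] for w in words if w in EMB]
    if not vecs:
        return None
    return tuple(sum(dim) / len(vecs) for dim in zip(*vecs))


def cosine(a, b):
    norm = math.hypot(*a) * math.hypot(*b)
    return sum(x * y for x, y in zip(a, b)) / norm if norm else 0.0


def labels_for(words, threshold=0.5):
    """Multi-label a span: every prototype at or above the threshold applies."""
    v = mean_vector(words)
    if v is None:
        return set()
    return {name for name, p in PROTOTYPES.items() if cosine(v, p) >= threshold}


def scan(tokens, max_len=3, threshold=0.5):
    """Label every window of 1..max_len tokens, keeping windows with labels."""
    results = []
    for start in range(len(tokens)):
        for n in range(1, min(max_len, len(tokens) - start) + 1):
            window = tokens[start:start + n]
            labels = labels_for(window, threshold)
            if labels:
                results.append((" ".join(window), sorted(labels)))
    return results


for window, labels in scan("the ball hit my arm".split()):
    print(window, labels)
```

The window “hit my arm” receives two labels, `impact` and `body`, because its averaged vector sits between the two prototypes; that is the multi-label behavior in miniature. Unknown words are simply skipped, so they neither help nor dilute the average.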

What follows are some speculative takes on related concepts.

Plunging and Control

He plunged downward. Downward: directional, with gravity (z-axis).

‘Plunged’ likely implies ‘without control.’ ‘Fell’ is without control. ‘Dived’ suggests control. ‘Hurtled’ could be either. ‘Streaked’ usually (not always) suggests control. The underlying dimension is control, or intention.

Loss of control, accident, helplessness.
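The control judgments above can be recorded as multi-attribute labels rather than a single tag per word. This minimal sketch transcribes them into Python dictionaries; `CONTROL`, `DIRECTION`, and `annotate` are hypothetical names, and the hedges (“likely”, “usually”) are kept as text rather than converted into made-up probabilities.

```python
import re

# Control judgments transcribed from the notes above. The hedges ("likely",
# "usually") stay in the label instead of being turned into numbers.
CONTROL = {
    "plunged": "without control (likely)",
    "fell": "without control",
    "dived": "with control",
    "hurtled": "either",
    "streaked": "with control (usually)",
}
# Direction words mapped to an axis; only "downward" appears in the notes.
DIRECTION = {"downward": "-z (with gravity)"}


def annotate(sentence):
    """Return {word: {attribute: label}} for every word with known attributes."""
    out = {}
    for word in re.findall(r"[a-z]+", sentence.lower()):
        labels = {}
        if word in CONTROL:
            labels["control"] = CONTROL[word]
        if word in DIRECTION:
            labels["direction"] = DIRECTION[word]
        if labels:
            out[word] = labels
    return out


print(annotate("He plunged downward."))
```

Here `annotate("He plunged downward.")` returns a control label for “plunged” and a direction label for “downward”, so one short sentence carries two independent dimensions.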

Few-shot learning: if we provide 30 examples of excess or overflow, would a model be able to recognize the concept elsewhere?

Overwhelmed

He had waited a moment too long

He’d left the water running and it had spilled onto the floor

I’m overloaded

I’m exhausted (i.e., I’ve overstepped my energy limits)

I passed him on the fourth lap (exceeded)

Beyond all expectations

I did too much

I overdid it

“Yes!” he blurted out. It was not his place to speak. A stunned silence fell over the room.

Swamped

Buried

Inundated

I’m older than he is

He’s a megalomaniac

You’re too old!

You’ve really gone above and beyond

You broke the record!
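With an instruction-following language model, the few-shot question can be posed directly by putting examples in the prompt (few-shot, or in-context, prompting). This sketch only assembles such a prompt; which model receives it is left open, and `build_prompt` plus the contrasting “other” example are assumptions added for illustration. A prompt made only of positive examples gives the model no reason to answer anything but “overflow”, so at least one contrasting sentence is included.

```python
# A subset of the overflow examples listed above.
OVERFLOW_EXAMPLES = (
    "He had waited a moment too long",
    "He'd left the water running and it had spilled onto the floor",
    "I'm overloaded",
    "I overdid it",
    "You've really gone above and beyond",
    "You broke the record!",
)
# A contrasting sentence (from the store example earlier in these notes), so the
# prompt does not teach the model that every answer is "overflow".
OTHER_EXAMPLES = ("I'm going to the store",)


def build_prompt(query, positives=OVERFLOW_EXAMPLES, negatives=OTHER_EXAMPLES):
    """Assemble a few-shot classification prompt that ends with the query."""
    lines = ["Label each sentence as 'overflow' or 'other'.", ""]
    shots = [(s, "overflow") for s in positives] + [(s, "other") for s in negatives]
    for sentence, label in shots:
        lines += [f"Sentence: {sentence}", f"Label: {label}", ""]
    lines += [f"Sentence: {query}", "Label:"]
    return "\n".join(lines)


print(build_prompt("She kept talking long after everyone had stopped listening"))
```

Whether a model then labels an unseen sentence correctly is exactly the open question; the prompt is just the experimental setup.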

Numericize the following, which are both concepts drawn from words and words themselves. What we are interested in is whether the abstracted concepts can be numericized:

1 = overflow

2 = speech

3 = physical effort

4 = distance (measured within space)

5 = time

6 = speed

7 = certainty

8 = doubt (any question carries doubt; it needs to resolve). This is a reason not to remove punctuation marks from text corpora. For instance, “Is this any reason to doubt his word? I think not” would otherwise become “is this any reason to doubt his word I think not.” We can still understand it, but only by filling in meaning that has been removed.
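The cost of stripping punctuation is easy to see in code. Below, `naive_clean` deletes every character in Python’s `string.punctuation` (and lowercases, as many pipelines also do), while `punctuation_aware_tokens` keeps `?`, `!`, and `.` as tokens of their own; both function names are hypothetical.

```python
import re
import string


def naive_clean(text):
    """Lowercase and delete all punctuation, as some preprocessing pipelines do."""
    return text.lower().translate(str.maketrans("", "", string.punctuation))


def punctuation_aware_tokens(text):
    """Lowercase, but keep '?', '!' and '.' as tokens so the doubt signal survives."""
    return re.findall(r"\w+|[?!.]", text.lower())


s = "Is this any reason to doubt his word? I think not."
print(naive_clean(s))
print(punctuation_aware_tokens(s))
```

The first version turns the example into “is this any reason to doubt his word i think not”; the second keeps `?` right after “word”, so the question, and with it the doubt, stays visible to a model.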

9 = need/requirement

10 = desire (want, crave, etc.)

11 = internal personal state (dreamed, thought, loved, cried, laughed, imagination). Actually, it’s just personal state, with multi-label potential: ‘cried’ is both internal and externally visible. So the general category is ‘human.’

12 = positive

13 = negative
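One straightforward way to numericize the list is a multi-hot vector: one slot per concept, set to 1 for every concept a sentence carries, which fits the multi-label potential noted under item 11. The sketch below is an assumption-laden illustration: the concept order follows the list, item 11 is entered as “human”, and the labels on the two example sentences are hypothetical hand judgments.

```python
# Concept labels from the list above, in order. Item 11 is entered as "human",
# the broader category the note settles on. Indices are list positions
# (0-based), not the numbers used in the list.
CONCEPTS = [
    "overflow", "speech", "physical effort", "distance", "time", "speed",
    "certainty", "doubt", "need", "desire", "human", "positive", "negative",
]
INDEX = {concept: i for i, concept in enumerate(CONCEPTS)}


def multi_hot(labels):
    """Encode a set of concept labels as a 0/1 vector over CONCEPTS."""
    vec = [0] * len(CONCEPTS)
    for label in labels:
        vec[INDEX[label]] = 1  # a KeyError flags a label outside the scheme
    return vec


# Hypothetical hand labels for two sentences from the overflow list.
examples = {
    '"Yes!" he blurted out.': {"speech", "overflow"},
    "I'm exhausted": {"physical effort", "overflow", "human", "negative"},
}
for sentence, labels in examples.items():
    print(sentence, multi_hot(labels))
```

Because several slots can be 1 at once, “I’m exhausted” can be overflow, physical effort, human, and negative simultaneously, which a single integer ID could not express.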

Doubt is a subset of lacking. Its physical analogue is a missing scoop: an incompleteness. The musical analogue would be a dominant seventh chord, which needs to resolve, such as a G7 chord in a blues in the key of C.

And then how about addition and subtraction à la word2vec?

Thought + sleep = dream

Space + measurement device = distance

Movement through space from point A to point B = distance

Space + ruler = distance (or is it space * ruler = distance?)
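The arithmetic can be tried on toy vectors. This sketch hand-sets four-dimensional vectors (hypothetical values; word2vec itself learns vectors from co-occurrence statistics), composes two words by addition or by elementwise multiplication, and returns the nearest remaining vocabulary word by cosine similarity, excluding the query words as analogy lookups commonly do.

```python
import math

# Hypothetical 4-d vectors with dimensions (mental, rest, spatial, measurement).
# Hand-set for illustration; word2vec learns its vectors from co-occurrence.
VECS = {
    "thought": (1.0, 0.0, 0.0, 0.0),
    "sleep": (0.0, 1.0, 0.0, 0.0),
    "dream": (1.0, 1.0, 0.0, 0.0),
    "bed": (0.0, 1.0, 1.0, 0.0),
    "space": (0.0, 0.0, 1.0, 0.0),
    "ruler": (0.0, 0.0, 0.0, 1.0),
    "distance": (0.0, 0.0, 1.0, 1.0),
}


def add(a, b):
    """Additive composition: every feature of either word is kept."""
    return tuple(x + y for x, y in zip(a, b))


def mul(a, b):
    """Elementwise product: only dimensions both vectors share survive."""
    return tuple(x * y for x, y in zip(a, b))


def cosine(a, b):
    norm = math.hypot(*a) * math.hypot(*b)
    return sum(x * y for x, y in zip(a, b)) / norm if norm else 0.0


def nearest(vec, exclude=()):
    """Most similar vocabulary word, skipping the query words themselves."""
    if not any(vec):
        return None  # a zero vector points nowhere
    return max((w for w in VECS if w not in exclude),
               key=lambda w: cosine(vec, VECS[w]))


print(nearest(add(VECS["thought"], VECS["sleep"]), exclude={"thought", "sleep"}))
print(nearest(add(VECS["space"], VECS["ruler"]), exclude={"space", "ruler"}))
print(nearest(mul(VECS["space"], VECS["ruler"]), exclude={"space", "ruler"}))
```

Here thought + sleep lands on dream and space + ruler on distance. Space * ruler, by contrast, is the zero vector, because these toy vectors share no dimension and an elementwise product keeps only what both words have in common. Multiplicative composition is a genuine alternative to addition; which one fits depends on how the learned vectors are laid out.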